Adaptive Generative UI

2025 Jun – 2026 Jan · UX Engineer Intern

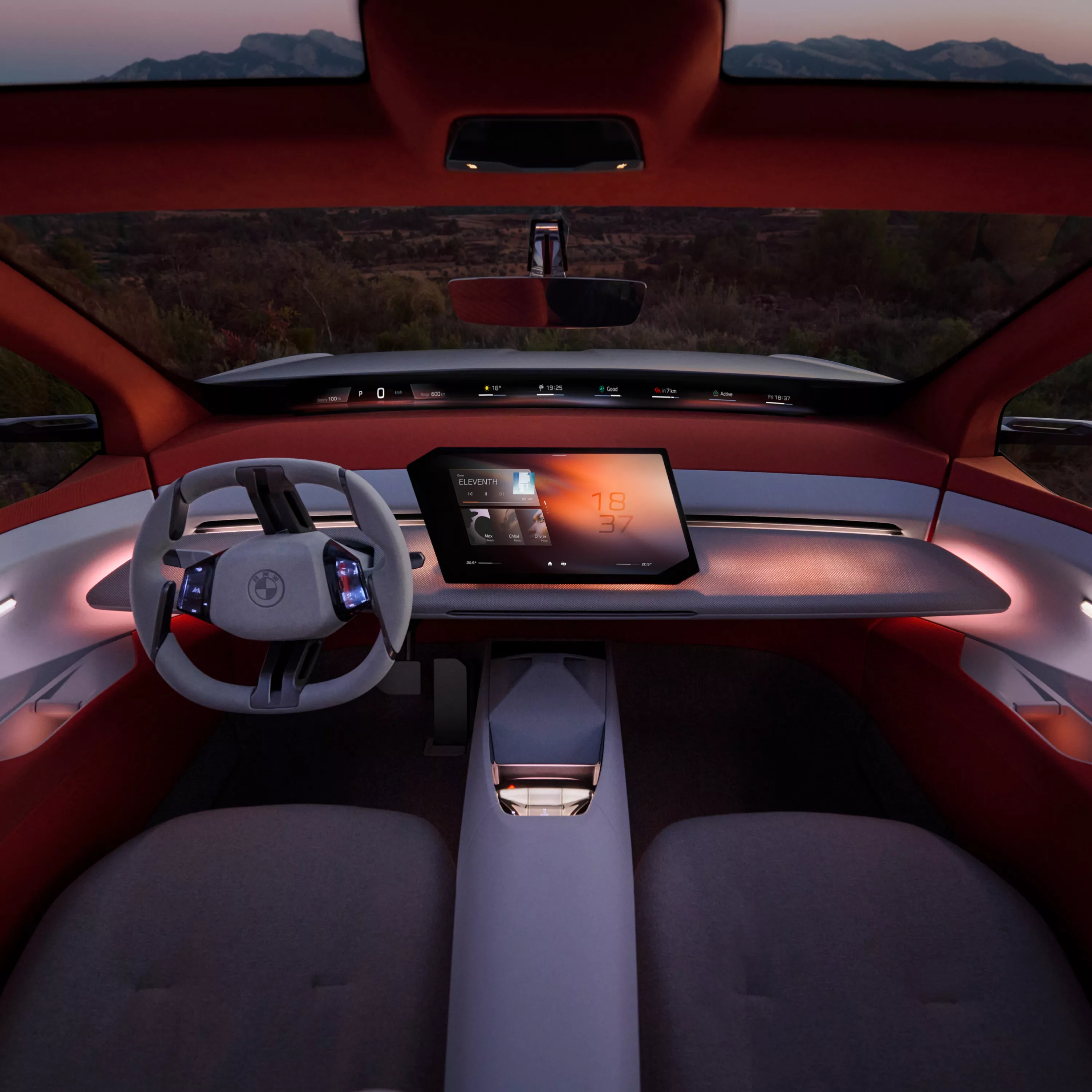

In-vehicle interfaces are static. They show the same things the same way, forcing drivers to dig for information at the exact moments road conditions, fatigue, or hazards make digging dangerous.

Design challenge: Compose the interface itself from context (driver state, road conditions, route) instead of asking the driver to navigate to what they need.

Developed a context-aware UI framework that uses generative models to synthesize interface components in real time. By analyzing driver telemetry, cabin state, and environmental context, the system proactively reorders the information hierarchy so the most relevant content surfaces first, reducing cognitive load.

- Designed and implemented a modular HMI architecture that decouples UI layout from underlying data streams, enabling seamless adaptation to varying driver contexts.

- Developed a React-based orchestration layer that synchronizes generative model outputs with high-fidelity vehicle displays, ensuring sub-50ms latency for interface transitions.

- Prototyped an Adaptive HMI concept for automotive production, translating experimental generative design into a safety-critical dashboard implementation.
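
One way to picture the decoupling described in the first bullet is to treat layout as a pure function of driver context, with data binding as a separate step. The sketch below is illustrative only: `DriverContext`, `PanelSpec`, `chooseLayout`, `bindData`, the signal names, and the 0.7 fatigue cut-off are all hypothetical and not taken from the project.

```typescript
// Hypothetical types: none of these names come from the project itself.
type DriverContext = {
  weather: "clear" | "snow";
  fatigue: number; // assumed scale: 0 (alert) to 1 (exhausted)
};

type Signals = Record<string, number>;

// A panel only declares which signals it reads; it never touches a stream directly.
type PanelSpec = { id: string; reads: string[] };

// Layout depends only on context, so it can change without touching data plumbing.
function chooseLayout(ctx: DriverContext): PanelSpec[] {
  const panels: PanelSpec[] = [{ id: "speed", reads: ["speedKph"] }];
  if (ctx.weather === "snow") {
    panels.push({ id: "snowReadiness", reads: ["tirePressureKpa", "brakeWearPct"] });
  }
  // Assumed rule: hide the comfort panel when the driver is very fatigued.
  if (ctx.fatigue < 0.7) {
    panels.push({ id: "climate", reads: ["cabinTempC"] });
  }
  return panels;
}

// Binding is a separate step: pick the declared signals out of the latest snapshot.
function bindData(panel: PanelSpec, latest: Signals): Signals {
  const bound: Signals = {};
  for (const key of panel.reads) {
    const value = latest[key];
    if (value !== undefined) bound[key] = value;
  }
  return bound;
}
```

Because `chooseLayout` never reads sensor values and `bindData` never decides what is visible, either side can be swapped or tested without the other.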

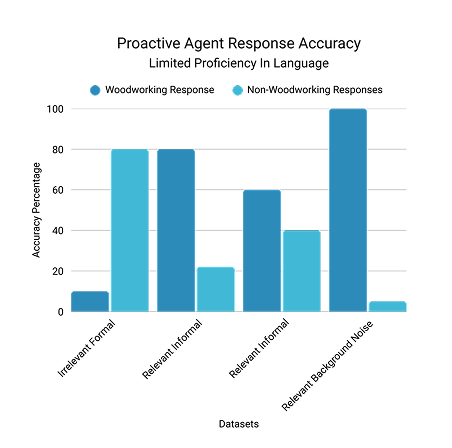

The research was grounded in two complementary methods: physiological measurement to capture what drivers cannot self-report, and structured usability testing to observe how they interact with dynamic interfaces.

Physiological Data Collection

Integrated a multi-sensor pipeline (PSI, PPG, heart rate, and CRM measurements) to capture driver state data that feeds directly into the UI's context gating logic. Design decisions were grounded in measurable cognitive and physiological signals rather than self-reported preference alone.
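
As a rough illustration of what context gating driven by a physiological signal could look like, the sketch below classifies driver load from heart rate relative to a resting baseline and filters out non-critical components as load rises. Everything here is an assumption made for the example: the type and function names, the use of heart rate alone, and the 1.15 and 1.3 ratios, which are placeholders rather than validated physiological cut-offs.

```typescript
// Hypothetical driver-state snapshot; the real pipeline combines several sensors.
type DriverState = { heartRateBpm: number; baselineBpm: number };
type LoadLevel = "low" | "elevated" | "high";
type Criticality = "critical" | "helpful" | "ambient";
type Component = { id: string; criticality: Criticality };

// Placeholder thresholds on heart rate relative to baseline (assumed values).
function classifyLoad(s: DriverState): LoadLevel {
  const ratio = s.heartRateBpm / s.baselineBpm;
  if (ratio >= 1.3) return "high";
  if (ratio >= 1.15) return "elevated";
  return "low";
}

// Which criticality classes may be shown at each load level (assumed policy).
const allowed: Record<LoadLevel, Criticality[]> = {
  low: ["critical", "helpful", "ambient"],
  elevated: ["critical", "helpful"],
  high: ["critical"],
};

// Gate: keep only the components permitted at the driver's current load level.
function gateComponents(components: Component[], s: DriverState): Component[] {
  const permitted = allowed[classifyLoad(s)];
  return components.filter((c) => permitted.includes(c.criticality));
}
```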

Usability Testing Framework

Designed a structured testing framework to evaluate how drivers interact with dynamically generated interfaces under varying scenario conditions. Testing ran Oct–Nov, with findings directly informing iteration on the isochrone GenUI concept and real-time layout logic.

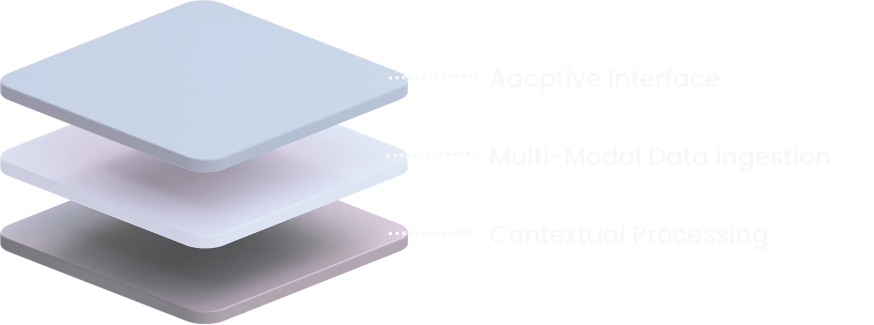

Three principles shaped every design decision across the project.

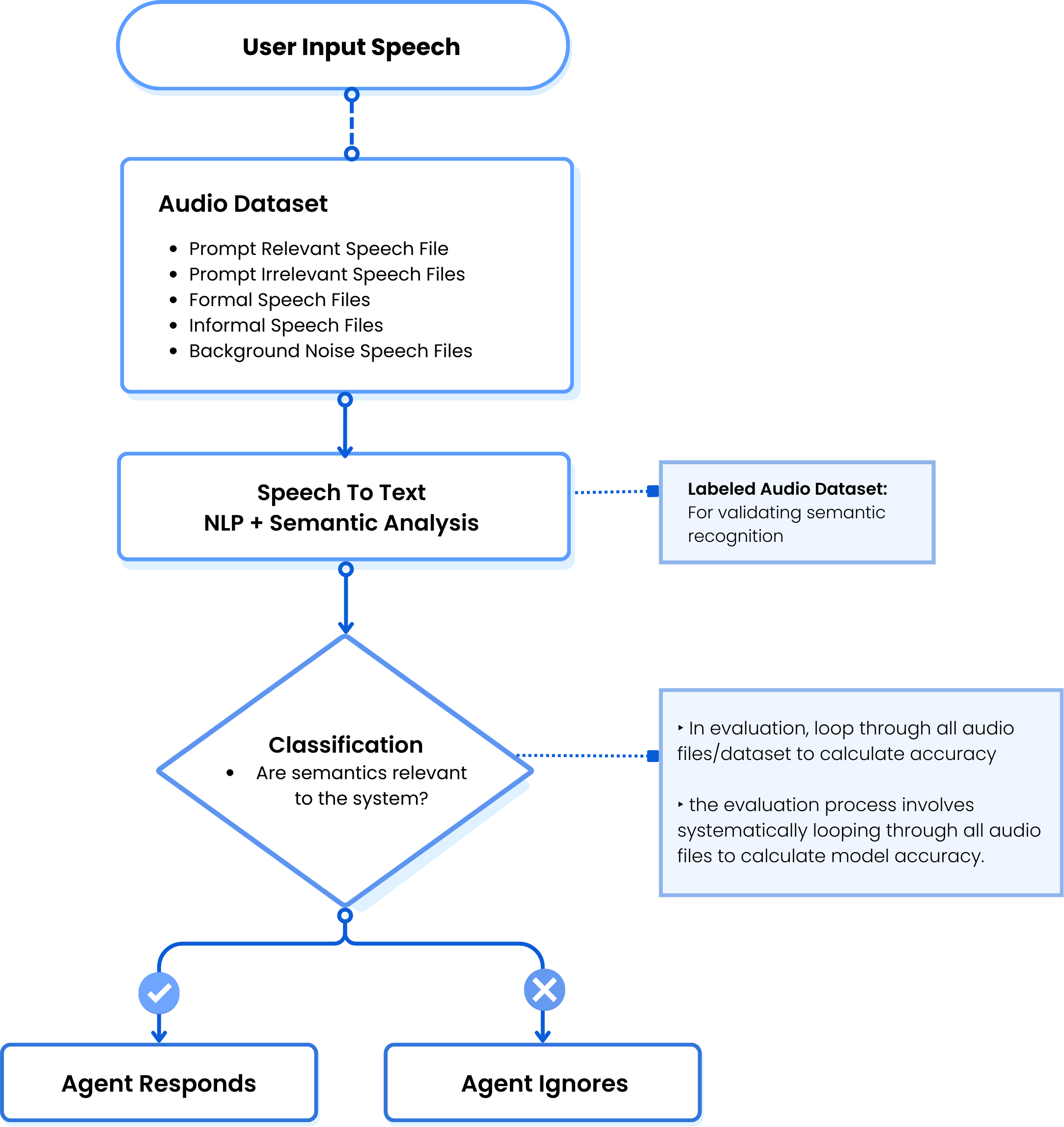

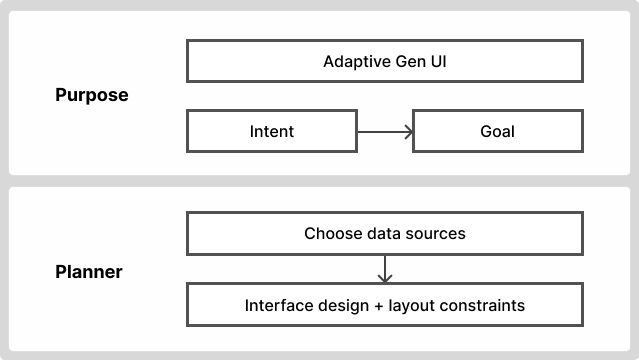

Voice and sensor input become generators of new interface components. The driver does not navigate — the interface responds.

Driver Input

Voice · Sensor · Telemetry

Intent Parsing

NLP · Context classification

UI Generation

Component synthesis · Agent selection

Layout Orchestration

Priority scoring · Glance-budget check

Rendered Interface

Sub-50ms · Auto-dismissed when resolved
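
The Layout Orchestration stage pairs priority scoring with a glance-budget check. A minimal sketch of how those two ideas might combine is shown below, assuming each candidate component carries a priority score and an estimated glance cost in milliseconds. The `Candidate` shape, the `composeLayout` name, the greedy strategy, and any budget value passed in are assumptions for illustration, not the project's actual logic.

```typescript
// Hypothetical candidate produced by the UI Generation stage.
type Candidate = {
  id: string;
  priority: number; // higher means more important (assumed scale)
  glanceMs: number; // estimated time the driver needs to read it (assumed)
};

// Greedy selection: walk candidates from highest to lowest priority and keep
// each one that still fits within the remaining glance budget.
function composeLayout(candidates: Candidate[], glanceBudgetMs: number): Candidate[] {
  const ranked = [...candidates].sort((a, b) => b.priority - a.priority);
  const chosen: Candidate[] = [];
  let spentMs = 0;
  for (const c of ranked) {
    if (spentMs + c.glanceMs <= glanceBudgetMs) {
      chosen.push(c);
      spentMs += c.glanceMs;
    }
  }
  return chosen;
}
```

A greedy pass keeps the highest-priority item whenever it fits at all, which is usually the behavior wanted for safety alerts, though it does not guarantee the densest possible layout.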

Example

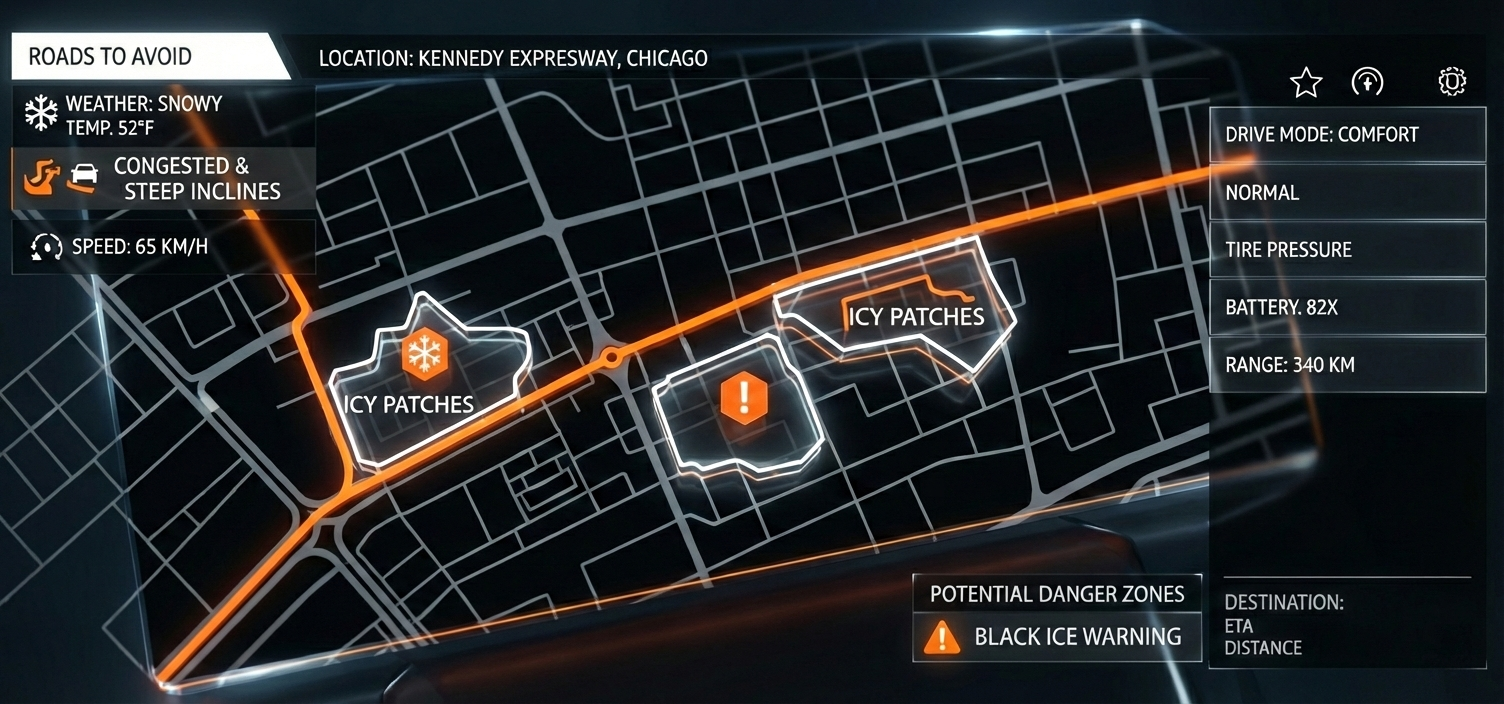

Driver says "Show me roads to avoid" during a snowstorm → system generates a contextual map overlay with icy patch markers, congestion warnings, and a black ice alert — assembled on demand, dismissed automatically when conditions clear.
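
The flow in this example, from utterance to overlay to automatic dismissal, can be sketched as follows. This is a simplified stand-in: a keyword match takes the place of real NLP intent parsing, and the `Conditions` and `Overlay` shapes, the layer names, and the rule that the black ice alert appears at or below 32°F are assumptions made for the illustration.

```typescript
// Hypothetical snapshot of current road conditions.
type Conditions = { snowing: boolean; tempF: number };

// An overlay carries its own "resolved" predicate so it can dismiss itself.
type Overlay = {
  kind: "dangerZoneMap";
  layers: string[];
  isResolved: (c: Conditions) => boolean;
};

// Keyword matching stands in for the real intent parser (assumption).
function parseIntent(utterance: string): "avoidRoads" | "unknown" {
  return /\bavoid\b/i.test(utterance) ? "avoidRoads" : "unknown";
}

// Assemble the overlay on demand from the intent plus current conditions.
function generateOverlay(utterance: string, now: Conditions): Overlay | null {
  if (parseIntent(utterance) !== "avoidRoads") return null;
  const layers = ["congestion"];
  if (now.snowing) layers.push("icyPatches");
  if (now.tempF <= 32) layers.push("blackIceAlert");
  return {
    kind: "dangerZoneMap",
    layers,
    // Assumed rule: dismiss once it has stopped snowing and it is above freezing.
    isResolved: (c) => !c.snowing && c.tempF > 32,
  };
}
```

A render loop would call `isResolved` with each fresh conditions reading and remove the overlay the first time it returns true.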

Designed in Figma, validated against one primary scenario — Kennedy Expressway, Chicago, 32°F. High information density, genuine safety stakes.

Passenger Comfort Panel

Identity, dual-zone temp, seat controls, tire pressure, and range — one glanceable view. No multi-step navigation.

Danger Zone Map Overlay

Icy patch markers, congestion flags, and black ice alert. Auto-surfaces on weather trigger. Dismissed when conditions clear.

Snow Readiness Module

Tire pressure, brake wear, road focus mode, heated steering — assembled proactively. Surfaces without being asked.

Climate Ring Display

Dual-zone temp as ambient rings in the instrument cluster. Communicates state without pulling sustained attention from the road.

A five-domain agent architecture where each agent owns a discrete area of the driving experience. A central orchestrator determines priority and layout based on live context signals.
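
A minimal sketch of the agent-and-orchestrator relationship, assuming each agent inspects the live context and either proposes a component or stays silent. The five domains are not named here, so the two agents below (`weatherAgent`, `comfortAgent`), their trigger rules, and their priority values are purely hypothetical placeholders.

```typescript
// Hypothetical live context shared with every agent.
type Ctx = { snowing: boolean; cabinTempC: number };
type Proposal = { agent: string; component: string; priority: number };

// Each agent owns one domain and decides on its own whether it has something to show.
interface DomainAgent {
  name: string;
  propose(ctx: Ctx): Proposal | null;
}

const weatherAgent: DomainAgent = {
  name: "weather",
  propose: (ctx) =>
    ctx.snowing ? { agent: "weather", component: "snowReadiness", priority: 0.9 } : null,
};

const comfortAgent: DomainAgent = {
  name: "comfort",
  propose: (ctx) =>
    ctx.cabinTempC < 18 ? { agent: "comfort", component: "climateRing", priority: 0.4 } : null,
};

// The orchestrator collects proposals and orders them; agents never talk to each other.
function orchestrate(agents: DomainAgent[], ctx: Ctx): Proposal[] {
  return agents
    .map((a) => a.propose(ctx))
    .filter((p): p is Proposal => p !== null)
    .sort((a, b) => b.priority - a.priority);
}
```

Keeping the ranking in one place means a new domain can be added by writing another agent, without changing how priorities are resolved.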